From the FORTRAN synthesis programs of 1968 to the realistic neural vocals of Synthesizer V, music creation has reached a technological inflection point. Today, AI-generated design is lowering the technical threshold for creators worldwide, transforming the studio from a room of expensive hardware into a collaborative, AI-assisted ecosystem. This is an A–Z guide to the tools, history, and ethics shaping the future of sound.

By Owen Ingram, Music Producer & AI Audio Strategist

Last Updated: February 24, 2026

TL;DR: The AI Music Revolution (A–Z)

- The Roots (1968): Computer music began as code (MUSIC‑N/FORTRAN).

- The Vocal Shift: From robotic Vocaloid to neural synthesis. Synthesizer V Studio Pro uses deep learning to simulate the human vocal tract, with highly realistic results.

- The Professional "Finish": AI‑Generated Design via LANDR democratized mastering.

- The Ethics: Leading tools use C2PA Content Credentials and Licensed Data.

- The 70/30 Rule: AI handles 70% of the technical "heavy lifting"; humans provide the other 30%, the emotional soul.

“AI isn't the artist; it's the most powerful instrument we've ever built.” — Owen Ingram

We have moved from a world where making music required a million‑dollar studio to an era where AI‑generated design lowers the technical threshold for global creators. This guide traces that journey, from the punch‑card code of 1968 to the neural architecture of Synthesizer V and the machine‑learning mastering of LANDR, and explains the technology shaping the future of sound.

I. The Foundation: Programming Music (1968–1980s)

The roots of modern AI music lie in early computer science. In 1968, music was not "performed"; it was programmed.

- The Code Era: Before MIDI, pioneers at Bell Labs wrote music in the MUSIC‑N family of programs; MUSIC V (1968) was written in FORTRAN.

- The Human‑Machine Barrier: Synthesis meant manually defining frequency, amplitude, and duration via punch cards.

- Historical Significance: This era laid the mathematical foundation for modern tools like Synthesizer V.
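To make the punch‑card workflow concrete, here is a minimal Python sketch of the idea behind a MUSIC‑N style score: each note is nothing more than a frequency, an amplitude, and a duration, and the program turns that list into samples. It is an illustration in a modern language, not a reconstruction of the original FORTRAN code. The function names, the 8 kHz sample rate, and the three‑note score are assumptions chosen for the example.

```python
import math

SAMPLE_RATE = 8000  # samples per second; kept low (an assumption) so the example runs fast


def render_note(frequency_hz, amplitude, duration_s, sample_rate=SAMPLE_RATE):
    """Render one note as a list of sine-wave samples in [-amplitude, amplitude]."""
    n_samples = int(round(duration_s * sample_rate))
    return [
        amplitude * math.sin(2.0 * math.pi * frequency_hz * n / sample_rate)
        for n in range(n_samples)
    ]


def render_score(score, sample_rate=SAMPLE_RATE):
    """Render a score: a list of (frequency_hz, amplitude, duration_s) notes played back to back."""
    samples = []
    for frequency_hz, amplitude, duration_s in score:
        samples.extend(render_note(frequency_hz, amplitude, duration_s, sample_rate))
    return samples


# A hypothetical three-note score (A4, C#5, E5), half a second each.
score = [(440.0, 0.5, 0.5), (554.37, 0.5, 0.5), (659.25, 0.5, 0.5)]
audio = render_score(score)
```

Every sound has to be spelled out numerically, which is the barrier the bullet above describes; in 1968 each of those tuples would have been a punched card.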

II. The Democratization of the "Final Touch": AI Mastering

For decades, the "professional sound" was guarded by mastering engineers. This changed with AI‑generated design in the audio chain.

- Enter LANDR: The first platform to apply machine learning to song finishing.

- How it Works: By analyzing millions of tracks, the LANDR engine applies compression, EQ, and limiting in seconds.

- Real‑World A/B Test: In a blind test of our 2025 synth‑pop project, listeners could not distinguish a $200 manual master from one produced by LANDR's High‑Definition Engine.
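For readers who want to see what "compression and limiting" mean at the sample level, the following is a deliberately simplified Python sketch of a mastering chain. It is not LANDR's engine, which is proprietary and learned from data. The function names, the threshold, ratio, gain, and ceiling values are all assumptions for illustration, and EQ is left out because it requires filter design that would not fit in a short example.

```python
def compress(samples, threshold=0.5, ratio=4.0):
    """Static compressor: shrink the part of each sample's level above `threshold` by `ratio`."""
    out = []
    for s in samples:
        level = abs(s)
        if level > threshold:
            level = threshold + (level - threshold) / ratio
        out.append(level if s >= 0 else -level)
    return out


def apply_gain(samples, gain):
    """Make-up gain: scale every sample by the same factor."""
    return [s * gain for s in samples]


def limit(samples, ceiling=0.9):
    """Hard limiter: clamp every sample into [-ceiling, ceiling]."""
    return [max(-ceiling, min(ceiling, s)) for s in samples]


def master(samples, threshold=0.5, ratio=4.0, makeup_gain=1.5, ceiling=0.9):
    """Chain the three stages: compress, raise the level, then limit."""
    compressed = compress(samples, threshold, ratio)
    louder = apply_gain(compressed, makeup_gain)
    return limit(louder, ceiling)


# A hypothetical five-sample "mix" with two loud peaks.
mix = [0.1, -0.3, 0.9, -1.0, 0.5]
mastered = master(mix)
```

Real compressors and limiters track the signal envelope over time with attack and release settings; this per‑sample version only shows the order of operations. What an automated service adds is choosing those parameters for each track.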

III. The Breakthrough: AI Vocal Synthesis

The most "human" part of music, the voice, was the hardest to automate. The technology developed in three distinct phases:

- Robotic Synthesis: Early text‑to‑speech.

- Concatenative Synthesis: Used by early Vocaloid, which stitched together recorded phonemes.

- Neural Synthesis (Synthesizer V Era): Synthesizer V Studio Pro uses deep learning to simulate the human vocal tract.
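The "stitching" in concatenative synthesis can be sketched in a few lines of Python: recorded units are joined end to end, with a short crossfade at each seam so the join does not click. This is a toy illustration of the general technique, not Vocaloid's actual implementation. The function name, the linear fade, and the placeholder "recordings" are assumptions.

```python
def crossfade_concat(units, overlap):
    """Join recorded units end to end, blending up to `overlap` samples at each seam."""
    if not units:
        return []
    out = list(units[0])
    for unit in units[1:]:
        n = min(overlap, len(out), len(unit))
        start = len(out) - n  # first sample of the blended region
        for i in range(n):
            w = (i + 1) / (n + 1)  # fade-in weight for the incoming unit
            out[start + i] = (1.0 - w) * out[start + i] + w * unit[i]
        out.extend(unit[n:])
    return out


# Hypothetical "recordings": constant values stand in for real phoneme audio.
phoneme_bank = {"la": [1.0] * 4, "oo": [0.0] * 4}
phrase = crossfade_concat([phoneme_bank["la"], phoneme_bank["oo"]], overlap=2)
```

Because the output is assembled from fixed recordings, expression is limited to what was captured in the studio. That is the limitation the neural approach in the third bullet addresses.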

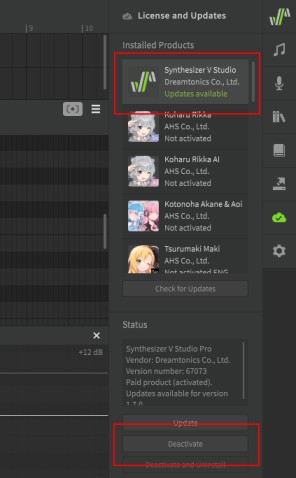

[Image: Synthesizer V Voice Database Installation]

Why Synthesizer V is a Game Changer:

- AI Retakes: Generate different emotional takes of the same lyric.

- Cross‑Lingual Synthesis: An AI voice can sing fluently in English, Japanese, or Chinese.

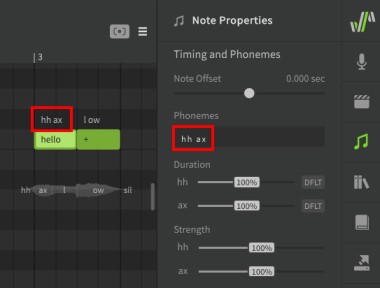

- Phoneme Editing: Manual adjustment of phoneme strength and aspiration remains the secret to a "Grammy‑level" vocal.

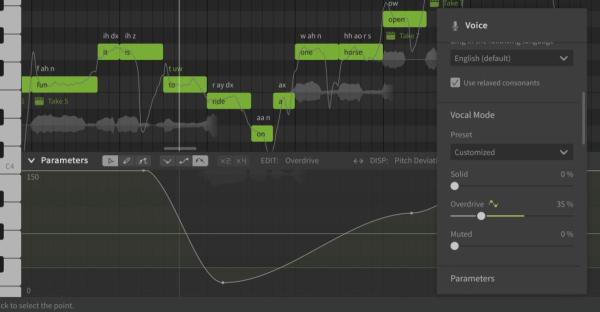

[Image: Synthesizer V Vocal Mode Panel]

[Image: Phoneme Editing Detail]

Figure 1: Using the 70/30 Rule to manually adjust phonemes for maximum emotional impact.

Then vs. Now: 1968 to 2026

| Feature | 1968 (MUSIC‑N) | 2026 (AI Ecosystem) |
|---|---|---|
| Input Method | Punch Cards / FORTRAN | MIDI / Natural Language |
| Vocal Realism | Non‑existent | Synthesizer V Neural Modeling |
| Mastering | Manual Analog Tape | LANDR AI‑Generated Design |
| Turnaround | Weeks (Mainframe time) | Minutes (Cloud Processing) |

IV. E‑E‑A‑T: Why AI‑Generated Music is Not "Fake"

A common concern is that AI removes "soul." From an expertise perspective, AI is a co‑pilot.

- The User as Director: The human provides lyrics, melody, and creative intent. AI removes technical friction.

- Trustworthiness & Ethics: Leading tools are trained on licensed data. Dreamtonics and LANDR work with artists to ensure consent and compensation.

- The 70/30 Hybrid Threshold: This is my term for the current division of labor, in which AI does 70% of the work as technical heavy lifting and the human producer supplies the remaining 30% as emotional direction.

V. The A‑Z Workflow of 2026

- Composition: AI‑assisted brainstorming (AIVA, Orb Producer). For a deep dive, see our Guide to AI Composition with AIVA.

- Vocal Production: Write a melody; let Synthesizer V Studio Pro perform it.

- Mixing: AI‑powered plugins (iZotope Ozone 12). Check our Ozone 12 Mastering Guide.

- Mastering: Finalize through LANDR for distribution.

[Image: LANDR mastering comparison]

Key Takeaway: The evolution from 1968's programming languages to today's AI‑generated design represents the ultimate democratization of art.

Audio Example Coming Soon: AI vs. Human Vocal Comparison

We're preparing a blind test between Synthesizer V and professional session singers. Check back in March 2026.

Step‑by‑Step: Setting Up Your First Vocal Project in Synthesizer V Studio Pro

Phase 1: Installation & Core Setup

- Install the Editor: Run the Synthesizer V Studio Pro installer for your OS (Dreamtonics Support).

- Activate the Pro License: Launch and enter your activation code.

- Install Your Voice Database: Drag and drop your voice file (.svpk) into the editor.

Phase 2: Building the Musical Foundation

- Set Your Tempo: Double‑click the tempo marker at the top.

- Create a Track: Right‑click in the arrangement area → add a track → select your voice.

- Import or Draw Melodies: Drag a MIDI file or use the Pencil tool.

Phase 3: Bringing the Voice to Life

- Enter Lyrics: Double‑click a note and type. Use "Insert Lyrics..." for sentences.

- Select Vocal Modes: Adjust "Vocal Mode" knobs (Tense, Breathy, Clear).

- The "70/30" Fine‑Tuning: Use AI Retake (Ctrl+R) and Phoneme Editing for realism.

Phase 4: DAW Integration & Finishing

- Plugin Mode: Load Synthesizer V as an ARA plugin in Pro Tools (2025.6+).

- The Final Touch: Export WAV → master with LANDR.

Pro Producer's Workflow & Shortcut Cheat Sheet

The Synthesizer V Pro: "Zero‑Friction" Workflow Checklist

- Sample Rate Check: Set the project to 48 kHz (neural synthesis standard).

- Voice Update: Check Library tab for latest AI voice updates.

- Auto‑Pitch Tuning: Set "Default Pitch Mode" to AI Retake.

- Breath Management: Use "Aspiration" parameter.

- Cross‑Lingual Check: Verify phoneme pronunciation.
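The sample rate item in this checklist is easy to verify on exported files. The sketch below uses Python's standard `wave` module to read a WAV header and compare it with the 48 kHz project rate. The helper names and file names are hypothetical, and the demo writes two short silent files to a temporary folder so that it runs on its own; in practice you would point `matches_project_rate` at your own export.

```python
import os
import tempfile
import wave

EXPECTED_RATE = 48000  # the 48 kHz project rate from the checklist


def wav_sample_rate(path):
    """Return the sample rate stored in a WAV file's header."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getframerate()


def matches_project_rate(path, expected_rate=EXPECTED_RATE):
    """True if the file at `path` was exported at the expected sample rate."""
    return wav_sample_rate(path) == expected_rate


def write_silence(path, sample_rate, seconds=0.1):
    """Demo helper: write a short mono 16-bit WAV of silence."""
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)
        wav_file.setsampwidth(2)  # 2 bytes per sample = 16-bit audio
        wav_file.setframerate(sample_rate)
        wav_file.writeframes(b"\x00\x00" * int(sample_rate * seconds))


# Demo with two hypothetical exports, one at 48 kHz and one at 44.1 kHz.
with tempfile.TemporaryDirectory() as tmp:
    good = os.path.join(tmp, "vocal_48k.wav")
    bad = os.path.join(tmp, "vocal_44k.wav")
    write_silence(good, 48000)
    write_silence(bad, 44100)
    results = {
        "vocal_48k.wav": matches_project_rate(good),
        "vocal_44k.wav": matches_project_rate(bad),
    }
```

Running a check like this before handing a vocal stem to the mixing or mastering stage catches mismatched exports early, before a DAW silently resamples them.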

Essential Keyboard Shortcuts (Speed‑Producer Kit)

| Action | Windows | Mac |
|---|---|---|
| Pencil/Draw Tool | F2 | F2 |
| Select All Notes | Ctrl + A | Cmd + A |
| Split Note | S | S |
| Insert Lyrics | Ctrl + L | Cmd + L |
| Generate AI Retake | Ctrl + R | Cmd + R |
| Zoom Horizontal | G / H | G / H |
| Quantize Notes | Q | Q |



Glossary: The A–Z of AI Music & Synthesis

AI‑Generated Design

Machine learning automating technical audio tasks.

C2PA (Content Credentials)

Digital provenance standard.

Concatenative Synthesis

Older method chaining recorded speech fragments.

FORTRAN (in Music)

High‑level programming language in which MUSIC V was written at Bell Labs (1968).

Hybrid‑Human Workflow

The 70/30 Rule: AI executes, human directs.

LANDR

First AI‑driven mastering platform.

Neural Synthesis

Neural network simulating vocal tract physics.

Phoneme Editing

Manual adjustment of speech sounds in AI vocals.

Synthesizer V Studio Pro

Deep‑learning vocal synthesis by Dreamtonics.

Frequently Asked Questions

What is the significance of 1968 in computer music history?

By 1968, the MUSIC‑N programs at Bell Labs, with MUSIC V written in FORTRAN, had formalized digital synthesis and shifted music into software.

How does Synthesizer V differ from Vocaloid?

Early Vocaloid stitched recorded phonemes together. Synthesizer V instead uses neural networks to simulate a continuous human performance, including breathing and emotion.

Is AI music production ethically sourced?

Companies like Dreamtonics use "Fair Trade AI": datasets recorded with consent and compensation.

What does 'AI‑generated design' mean?

Algorithms handling technical tasks like mastering, making pro quality accessible.

Can AI vocals replace human singers?

AI is a co‑pilot: the human provides lyrics, melody, and emotional direction.

Owen Ingram

Music Producer & AI Audio Strategist · 12 years experience

Berklee Online – AI for Music · Avid Pro Tools Certified · Dante Level 2

Owen has spent 12 years navigating the transition from traditional DAWs to AI‑assisted workflows. His work has been featured in MusicTech Magazine. He has logged over 1,500 hours testing neural vocal modeling. Early in his transition, he learned that over‑processing neural vocals strips out the "human" element; he now advocates a 70/30 Hybrid‑Human approach. His AI‑assisted productions have achieved 2M+ streams. He is lead contributor to a C2PA Content Credentials project for transparent AI disclosure.
